LLMs for HPO

TLDR -- Combining LLMs and Bayesian Optimization for Hyperparameter Optimization

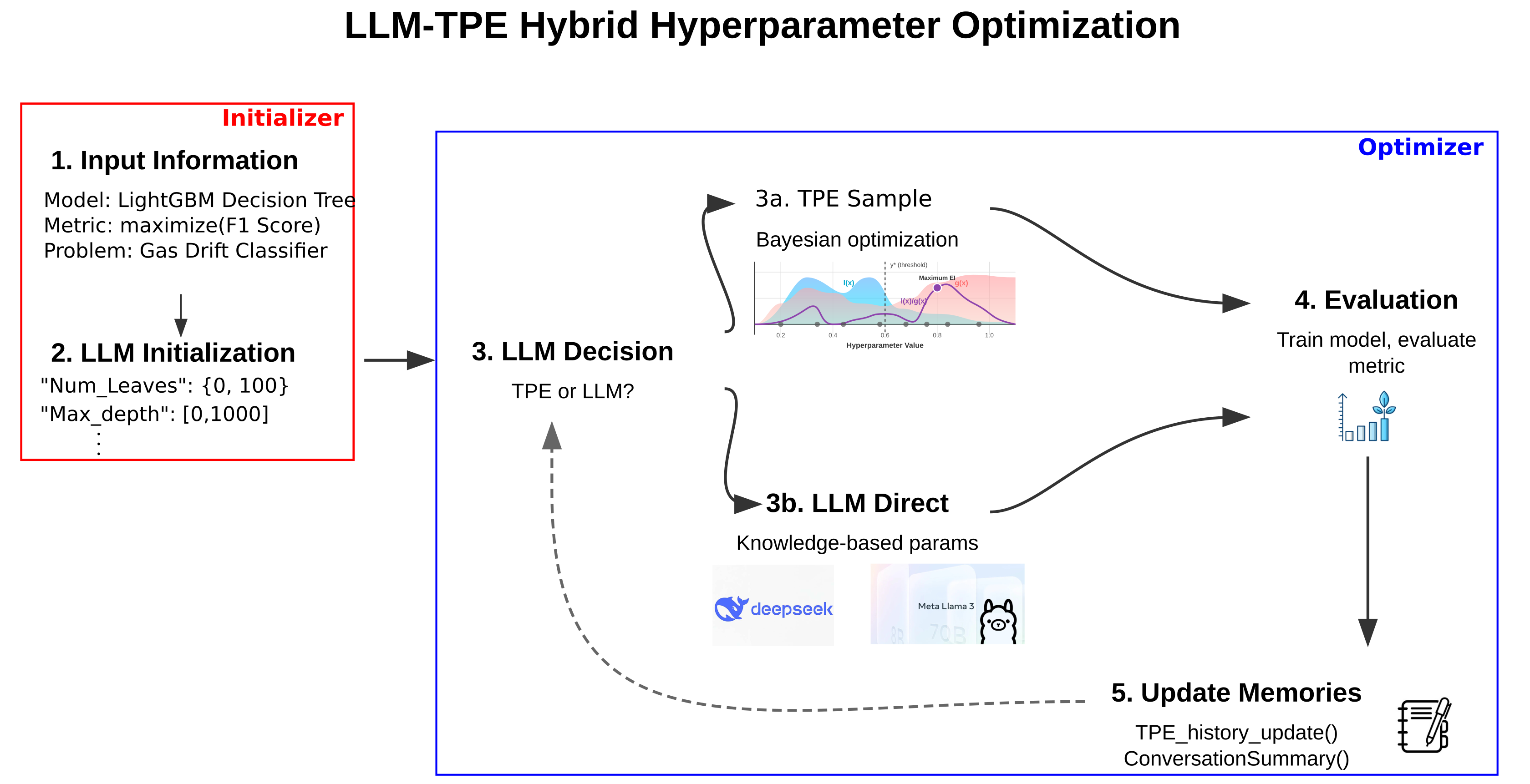

Abstract: A common challenge when training a neural network (NN) is choosing its hyperparameters, e.g., layer count, learning rate, and dropout rate. We propose combining traditional hyperparameter optimization (HPO) algorithms with several LLM agents that handle initialization of the parameter space, exploration of the parameter space, and real-time trial monitoring.

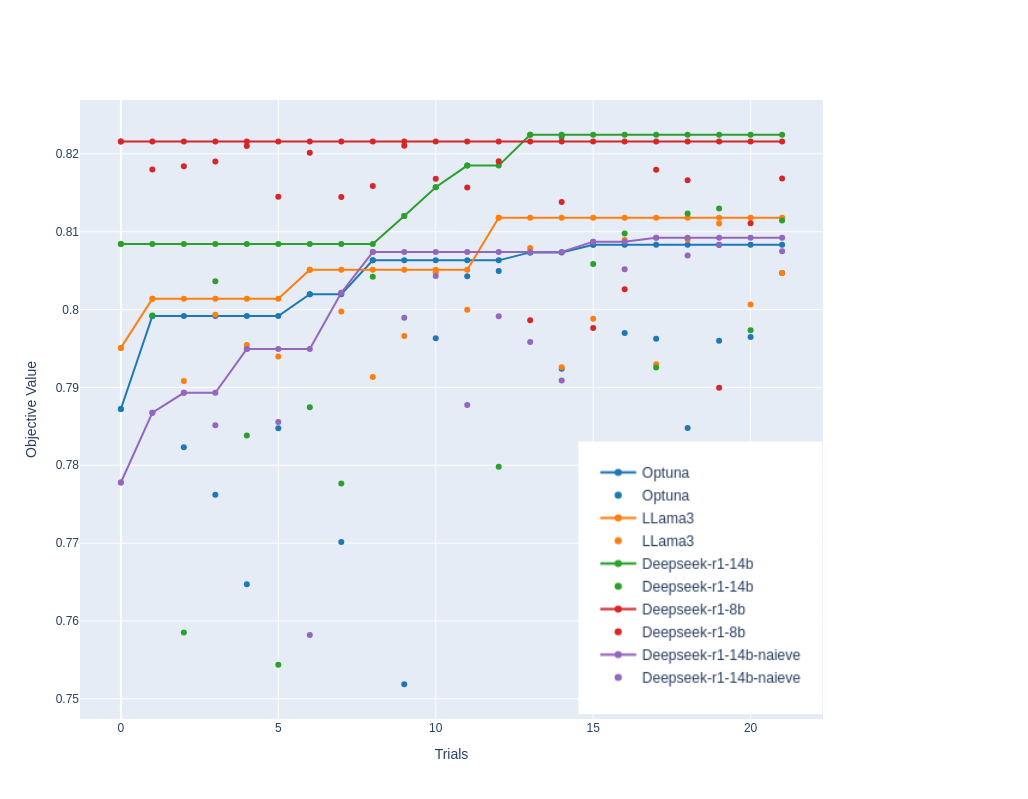

We evaluated several open-source LLMs, including DeepSeek and Llama, in combination with the Optuna Bayesian HPO library.